My Anaconda doesn't launch properly after a fresh installation. After clicking on Anaconda Navigator, a black screen flashes and then disappears immediately. If you have this problem, this tutorial might help you fix the issue.

Download & Install Anaconda

Anaconda Distribution is popular software that includes the Python and R programming languages for scientific computing. It comes with over 250 pre-installed Python libraries, so it's pretty convenient: we can use things like pandas or numpy right out of the box. I prefer to always download from an official website, so here you go: Download Anaconda from its official website. Note that this distribution installs the 64-bit version of Python 3.9.

I have multiple versions of Python installed on my computer and don't want Anaconda to mess with my computer settings, so I left both these options unchecked during the installation. This means I might have to adjust some settings manually later, but that's okay.

You might get a `WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform` warning for the second command; ignore that for now.

If you don't have Spyder on Anaconda, install it by selecting the Install option in Navigator. After installing, write the program below and run it by pressing F5 or by selecting the Run button from the menu. The program includes the lines `spark = ('').getOrCreate()` and `df = spark.createDataFrame(data).toDF(*columns)`.

This completes the PySpark install in Anaconda, validating PySpark, and running it in Jupyter notebook and the Spyder IDE. I have tried my best to lay out step-by-step instructions. In case I missed any, or you have any issues installing, please comment below. Your comments might help others.
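The sample program for Spyder appears above only as a fragment, so here is a minimal sketch of what such a validation script might look like. The column names, sample rows, application name, and the helper `build_sample_dataframe` are all made up for illustration; only the `createDataFrame(data).toDF(*columns)` pattern comes from the text.

```python
# Hypothetical sample data: the original rows and column names are not shown.
columns = ["language", "users_count"]
data = [("Java", 20000), ("Python", 100000), ("Scala", 3000)]


def build_sample_dataframe(app_name="anaconda-pyspark-check"):
    """Start (or reuse) a SparkSession and build a small DataFrame.

    The app name is an arbitrary placeholder. Raises ImportError when
    PySpark is not installed in the active environment.
    """
    # Imported inside the function so the sample data above can still be
    # inspected in an environment where PySpark is missing.
    from pyspark.sql import SparkSession

    spark = SparkSession.builder.appName(app_name).getOrCreate()
    # createDataFrame infers the column types from the tuples; toDF then
    # renames the auto-generated columns to the names listed in `columns`.
    df = spark.createDataFrame(data).toDF(*columns)
    return spark, df
```

To try it, call `spark, df = build_sample_dataframe()` and then `df.show()`. If a small table is printed, the installation is probably fine. Call `spark.stop()` when you are done.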

In Jupyter, each cell runs as a unit, so you can run a cell independently as long as it does not depend on previous cells. If you get a PySpark error in Jupyter, run the following commands in a notebook cell to find PySpark. Then run the commands below to make sure PySpark is working in Jupyter.
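The exact commands for finding PySpark are not reproduced here, so the following is only a rough sketch of one way to check what the notebook kernel can see. It uses nothing but the standard library. The helper name `locate_pyspark` is made up, and treating `SPARK_HOME` as relevant is an assumption about how your Spark install is configured.

```python
import importlib.util
import os
import sys


def locate_pyspark():
    """Report where (or whether) the current interpreter can find PySpark."""
    spec = importlib.util.find_spec("pyspark")
    return {
        # The interpreter backing this notebook kernel or script.
        "python": sys.executable,
        # None when the pyspark package cannot be imported from here.
        "pyspark_location": spec.origin if spec is not None else None,
        # None when SPARK_HOME is not set for this process.
        "spark_home": os.environ.get("SPARK_HOME"),
    }


for key, value in locate_pyspark().items():
    print(f"{key}: {value}")
```

If `pyspark_location` comes back as `None`, the kernel is most likely running in an environment other than the one where PySpark was installed.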

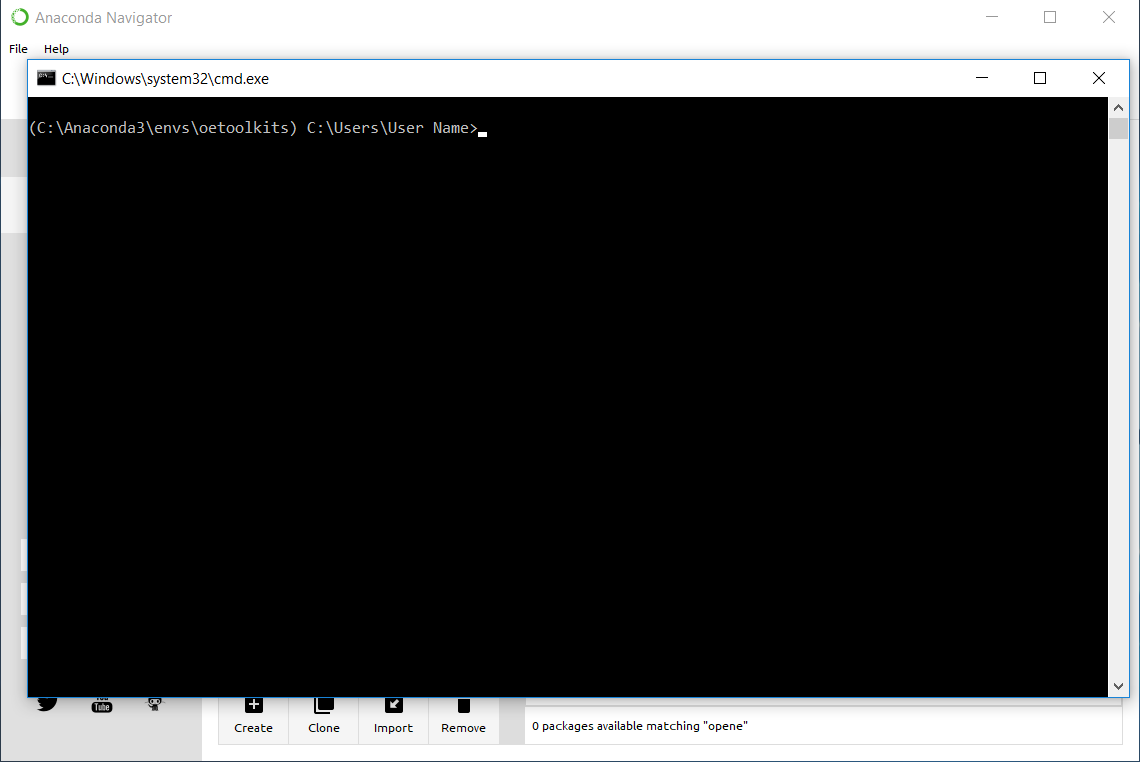

On Mac, you can find Anaconda Navigator from Finder => Applications or from Launchpad. Anaconda Navigator is a UI application where you can manage Anaconda packages, environments, etc. If you don't have Jupyter notebook installed on Anaconda, install it by selecting the Install option. After installing, open Jupyter by selecting the Launch button. This opens Jupyter notebook in the default browser. Now select New -> PythonX, enter the lines below, and select Run.

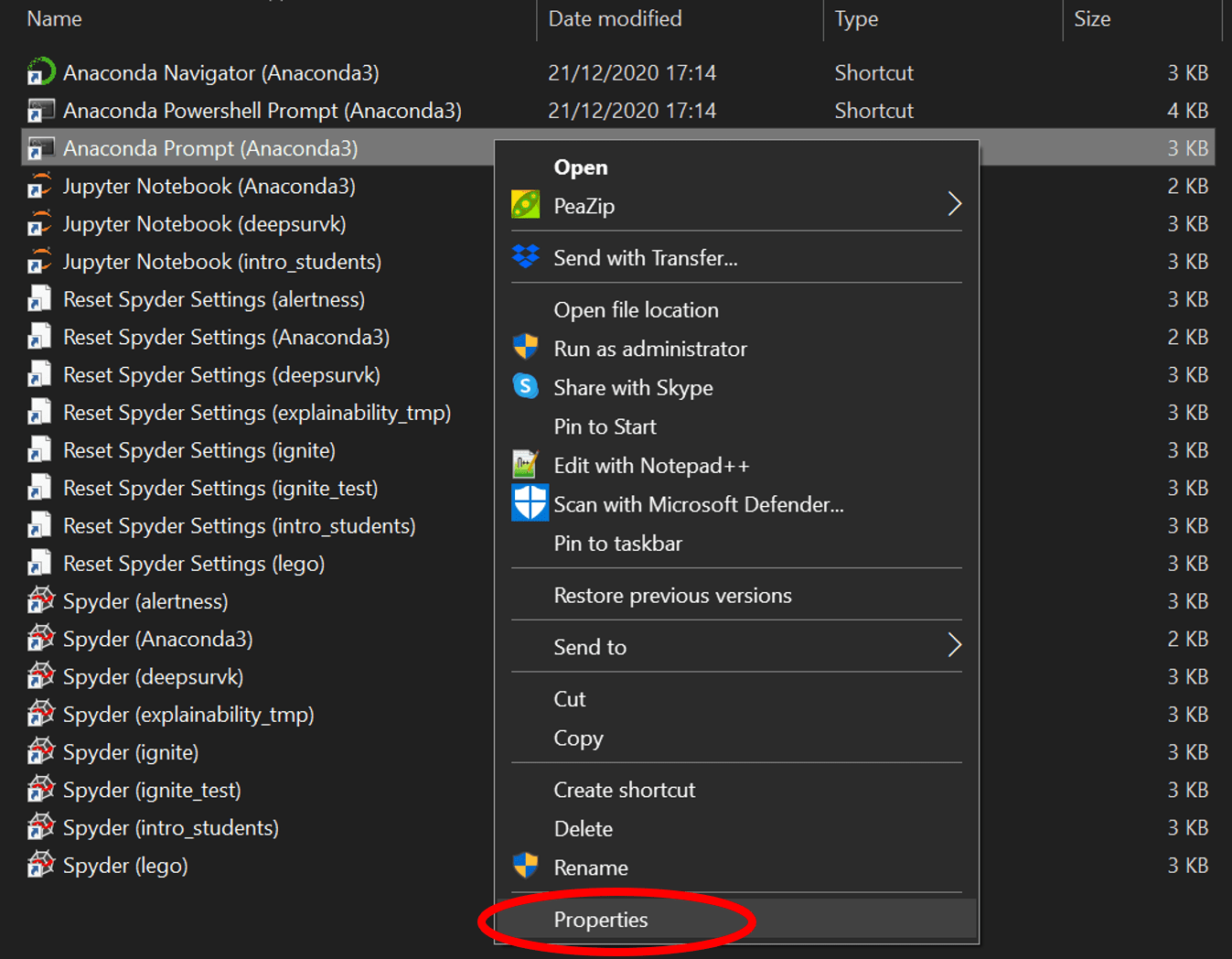

Let's create a PySpark DataFrame with some sample data to validate the installation. Enter the following commands in the PySpark shell in the same order; the first one assigns the sample rows to `data`. Note that SparkSession `spark` and SparkContext `sc` are available by default in the PySpark shell. For more examples on PySpark, refer to PySpark Tutorial with Examples. Now open the Spark Web UI from your favorite web browser to monitor your jobs.

With the last step, the PySpark install in Anaconda is complete and has been validated by launching the PySpark shell and running the sample program. Now let's see how to run a similar PySpark example in Jupyter notebook. Open Anaconda Navigator: on Windows, use the Start menu or type Anaconda in the search box.

After installing Anaconda via the VS Installer, I have an Anaconda Prompt in the Start menu. However, it does not work after launching it. After launch, I find a console window with the following text inside: `C:\Program is not recognized as an internal or external command, operable program or batch file.`
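A message like "C:\Program is not recognized" is what the Windows command prompt typically prints when a path containing a space (for example, something under `C:\Program Files`) is run without quotes, so everything after the space is cut off. As a rough diagnostic sketch, the snippet below checks where a couple of commands resolve to and whether their paths contain spaces. The helper names and the list of tools to check are my own choices, not part of any Anaconda tooling.

```python
import shutil


def describe_tool(name):
    """Return the resolved path of a command and whether it contains a space."""
    path = shutil.which(name)
    return {
        "name": name,
        # None when the command is not found on PATH.
        "path": path,
        # True only when the command was found and its path has a space.
        "has_space": path is not None and " " in path,
    }


def quote_if_needed(path):
    """Wrap a path in double quotes when it contains a space."""
    return f'"{path}"' if " " in path else path


# Assumed tool names; adjust them to whatever fails to launch for you.
for tool in ("python", "conda"):
    info = describe_tool(tool)
    if info["path"] is None:
        print(f"{tool}: not found on PATH")
    else:
        print(f"{tool}: {quote_if_needed(info['path'])}")
```

If a tool resolves to a path with spaces, any shortcut or script that launches it needs that path in quotes. This is only one possible cause of the error, so treat the result as a hint rather than a diagnosis.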
